Mike's Notes

This is part 2 of a series of 3 posts reprinting articles that cover the crisis in particle physics that has emerged since scientists at CERN failed to discover a whole range of new particles predicted by string theorists.

- Quanta Writers and Editors Discuss Trends in Science and Math (Quanta Magazine)

- A Fight for the Soul of Science (Quanta Magazine)

- Scientific Method: Defend the integrity of physics (Nature)

The article below is a report from a 3-day workshop in Germany. The workshop was held in response to the article in Nature, Scientific Method: Defend the integrity of physics.

Last Updated

11/05/2025

A Fight for the Soul of Science

Senior Writer/Editor

String theory, the multiverse and other ideas of modern physics are potentially untestable. At a historic meeting in Munich, scientists and philosophers asked: should we trust them anyway?

Physicists typically think they “need philosophers and historians of science like birds need ornithologists,” the Nobel laureate David Gross told a roomful of philosophers, historians and physicists last week in Munich, Germany, paraphrasing Richard Feynman.

Physicists George Ellis (center) and Joe Silk (right) at Ludwig Maximilian University in Munich on Dec. 7.

Laetitia Vancon for Quanta Magazine

But desperate times call for desperate measures.

Fundamental physics faces a problem, Gross explained — one dire enough to call for outsiders’ perspectives. “I’m not sure that we don’t need each other at this point in time,” he said.

It was the opening session of a three-day workshop, held in a Romanesque-style lecture hall at Ludwig Maximilian University (LMU Munich) one year after George Ellis and Joe Silk, two white-haired physicists now sitting in the front row, called for such a conference in an incendiary opinion piece in Nature. One hundred attendees had descended on a land with a celebrated tradition in both physics and the philosophy of science to wage what Ellis and Silk declared a “battle for the heart and soul of physics.”

The crisis, as Ellis and Silk tell it, is the wildly speculative nature of modern physics theories, which they say reflects a dangerous departure from the scientific method. Many of today’s theorists — chief among them the proponents of string theory and the multiverse hypothesis — appear convinced of their ideas on the grounds that they are beautiful or logically compelling, despite the impossibility of testing them. Ellis and Silk accused these theorists of “moving the goalposts” of science and blurring the line between physics and pseudoscience. “The imprimatur of science should be awarded only to a theory that is testable,” Ellis and Silk wrote, thereby disqualifying most of the leading theories of the past 40 years. “Only then can we defend science from attack.”

They were reacting, in part, to the controversial ideas of Richard Dawid, an Austrian philosopher whose 2013 book String Theory and the Scientific Method identified three kinds of “non-empirical” evidence that Dawid says can help build trust in scientific theories absent empirical data. Dawid, a researcher at LMU Munich, answered Ellis and Silk’s battle cry and assembled far-flung scholars anchoring all sides of the argument for the high-profile event last week.

David Gross, a theoretical physicist at the University of California, Santa Barbara.

Laetitia Vancon for Quanta Magazine

Gross, a supporter of string theory who won the 2004 Nobel Prize in Physics for his work on the force that glues atoms together, kicked off the workshop by asserting that the problem lies not with physicists but with a “fact of nature” — one that we have been approaching inevitably for four centuries.

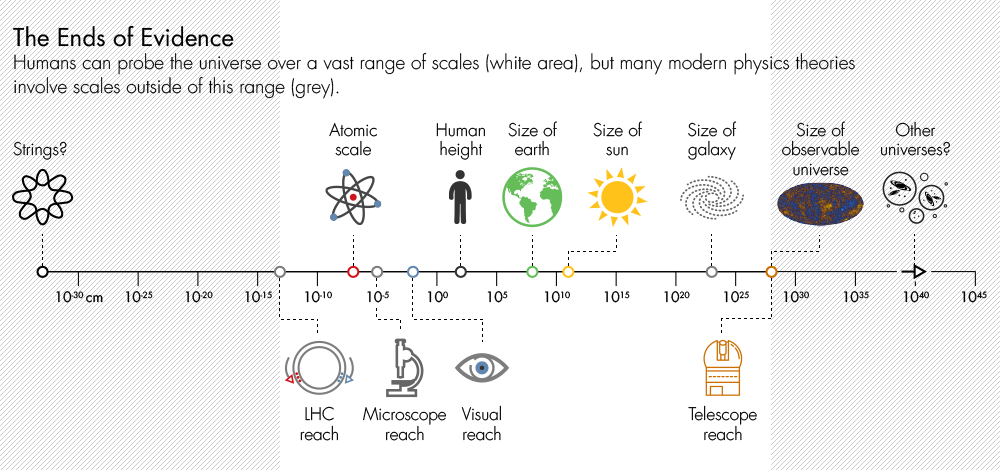

The dogged pursuit of a fundamental theory governing all forces of nature requires physicists to inspect the universe more and more closely — to examine, for instance, the atoms within matter, the protons and neutrons within those atoms, and the quarks within those protons and neutrons. But this zooming in demands evermore energy, and the difficulty and cost of building new machines increases exponentially relative to the energy requirement, Gross said. “It hasn’t been a problem so much for the last 400 years, where we’ve gone from centimeters to millionths of a millionth of a millionth of a centimeter” — the current resolving power of the Large Hadron Collider (LHC) in Switzerland, he said. “We’ve gone very far, but this energy-squared is killing us.”

As we approach the practical limits of our ability to probe nature’s underlying principles, the minds of theorists have wandered far beyond the tiniest observable distances and highest possible energies. Strong clues indicate that the truly fundamental constituents of the universe lie at a distance scale 10 million billion times smaller than the resolving power of the LHC. This is the domain of nature that string theory, a candidate “theory of everything,” attempts to describe. But it’s a domain that no one has the faintest idea how to access.

The problem also hampers physicists’ quest to understand the universe on a cosmic scale: No telescope will ever manage to peer past our universe’s cosmic horizon and glimpse the other universes posited by the multiverse hypothesis. Yet modern theories of cosmology lead logically to the possibility that our universe is just one of many.

Tynan DeBold for Quanta Magazine; Icons via Freepik

Whether the fault lies with theorists for getting carried away, or with nature, for burying its best secrets, the conclusion is the same: Theory has detached itself from experiment. The objects of theoretical speculation are now too far away, too small, too energetic or too far in the past to reach or rule out with our earthly instruments. So, what is to be done? As Ellis and Silk wrote, “Physicists, philosophers and other scientists should hammer out a new narrative for the scientific method that can deal with the scope of modern physics.”

“The issue in confronting the next step,” said Gross, “is not one of ideology but strategy: What is the most useful way of doing science?”

Over three mild winter days, scholars grappled with the meaning of theory, confirmation and truth; how science works; and whether, in this day and age, philosophy should guide research in physics or the other way around. Over the course of these pressing yet timeless discussions, a degree of consensus took shape.

Rules of the Game

Throughout history, the rules of science have been written on the fly, only to be revised to fit evolving circumstances. The ancients believed they could reason their way toward scientific truth. Then, in the 17th century, Isaac Newton ignited modern science by breaking with this “rationalist” philosophy, adopting instead the “empiricist” view that scientific knowledge derives only from empirical observation. In other words, a theory must be proved experimentally to enter the book of knowledge.

But what requirements must an untested theory meet to be considered scientific? Theorists guide the scientific enterprise by dreaming up the ideas to be put to the test and then interpreting the experimental results; what keeps theorists within the bounds of science?

Today, most physicists judge the soundness of a theory by using the Austrian-British philosopher Karl Popper’s rule of thumb. In the 1930s, Popper drew a line between science and nonscience in comparing the work of Albert Einstein with that of Sigmund Freud. Einstein’s theory of general relativity, which cast the force of gravity as curves in space and time, made risky predictions — ones that, if they hadn’t succeeded so brilliantly, would have failed miserably, falsifying the theory. But Freudian psychoanalysis was slippery: Any fault of your mother’s could be worked into your diagnosis. The theory wasn’t falsifiable, and so, Popper decided, it wasn’t science.

Paul Teller (by window), a philosopher and professor emeritus at the University of California, Davis.

Laetitia Vancon for Quanta Magazine

Critics accuse string theory and the multiverse hypothesis, as well as cosmic inflation — the leading theory of how the universe began — of falling on the wrong side of Popper’s line of demarcation. To borrow the title of the Columbia University physicist Peter Woit’s 2006 book on string theory, these ideas are “not even wrong,” say critics. In their editorial, Ellis and Silk invoked the spirit of Popper: “A theory must be falsifiable to be scientific.”

But, as many in Munich were surprised to learn, falsificationism is no longer the reigning philosophy of science. Massimo Pigliucci, a philosopher at the Graduate Center of the City University of New York, pointed out that falsifiability is woefully inadequate as a separator of science and nonscience, as Popper himself recognized. Astrology, for instance, is falsifiable — indeed, it has been falsified ad nauseam — and yet it isn’t science. Physicists’ preoccupation with Popper “is really something that needs to stop,” Pigliucci said. “We need to talk about current philosophy of science. We don’t talk about something that was current 50 years ago.”

Nowadays, as several philosophers at the workshop said, Popperian falsificationism has been supplanted by Bayesian confirmation theory, or Bayesianism, a modern framework based on the 18th-century probability theory of the English statistician and minister Thomas Bayes. Bayesianism allows for the fact that modern scientific theories typically make claims far beyond what can be directly observed — no one has ever seen an atom — and so today’s theories often resist a falsified-unfalsified dichotomy. Instead, trust in a theory often falls somewhere along a continuum, sliding up or down between 0 and 100 percent as new information becomes available. “The Bayesian framework is much more flexible” than Popper’s theory, said Stephan Hartmann, a Bayesian philosopher at LMU. “It also connects nicely to the psychology of reasoning.”
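To make the idea of a sliding degree of trust concrete, here is a minimal sketch of a Bayesian update in Python. It is an illustration only, not a calculation presented at the workshop: the function name `bayes_update`, the 50 percent starting credence and the likelihood values are all assumptions chosen so the arithmetic is easy to follow.

```python
def bayes_update(prior, p_evidence_if_true, p_evidence_if_false):
    """Return P(theory | evidence) from Bayes' rule.

    prior               -- credence in the theory before the new evidence
    p_evidence_if_true  -- probability of seeing the evidence if the theory is true
    p_evidence_if_false -- probability of seeing the evidence if the theory is false
    """
    numerator = p_evidence_if_true * prior
    denominator = numerator + p_evidence_if_false * (1.0 - prior)
    return numerator / denominator


# Hypothetical numbers, invented for illustration only.
credence = 0.5  # start undecided
observations = [
    (0.8, 0.4),  # evidence twice as likely if the theory is true
    (0.8, 0.4),  # a second supportive result
    (0.3, 0.6),  # evidence twice as likely if the theory is false
]

history = [credence]
for p_true, p_false in observations:
    credence = bayes_update(credence, p_true, p_false)
    history.append(credence)

for step, value in enumerate(history):
    print(f"after {step} observations: {value:.1%}")
```

With these made-up inputs the credence climbs from 50 percent to about 67 and then 80 percent, and then drops back to about 67 percent after the unfavorable result. It never reaches exactly 0 or 100 percent, which is the continuum the philosophers contrast with a falsified-unfalsified dichotomy.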

Gross concurred, saying that, upon learning about Bayesian confirmation theory from Dawid’s book, he felt “somewhat like the Molière character who said, ‘Oh my God, I’ve been talking prose all my life!’”

Another advantage of Bayesianism, Hartmann said, is that it is enabling philosophers like Dawid to figure out “how this non-empirical evidence fits in, or can be fit in.”

Another Kind of Evidence

Dawid, who is 49, mild-mannered and smiley with floppy brown hair, started his career as a theoretical physicist. In the late 1990s, during a stint at the University of California, Berkeley, a hub of string-theory research, Dawid became fascinated by how confident many string theorists seemed to be that they were on the right track, despite string theory’s complete lack of empirical support. “Why do they trust the theory?” he recalls wondering. “Do they have different ways of thinking about it than the canonical understanding?”

String theory says that elementary particles have dimensionality when viewed close-up, appearing as wiggling loops (or “strings”) and membranes at nature’s highest zoom level. According to the theory, extra dimensions also materialize in the fabric of space itself. The different vibrational modes of the strings in this higher-dimensional space give rise to the spectrum of particles that make up the observable world. In particular, one of the vibrational modes fits the profile of the “graviton” — the hypothetical particle associated with the force of gravity. Thus, string theory unifies gravity, now described by Einstein’s theory of general relativity, with the rest of particle physics.

Laetitia Vancon for Quanta Magazine

However, string theory, which has its roots in ideas developed in the late 1960s, has made no testable predictions about the observable universe. To understand why so many researchers trust it anyway, Dawid signed up for some classes in philosophy of science, and upon discovering how little study had been devoted to the phenomenon, he switched fields.

In the early 2000s, he identified three non-empirical arguments that generate trust in string theory among its proponents. First, there appears to be only one version of string theory capable of achieving unification in a consistent way (though it has many different mathematical representations); furthermore, no other “theory of everything” capable of unifying all the fundamental forces has been found, despite immense effort. (A rival approach called loop quantum gravity describes gravity at the quantum scale, but makes no attempt to unify it with the other forces.) This “no-alternatives” argument, colloquially known as “string theory is the only game in town,” boosts theorists’ confidence that few or no other possible unifications of the four fundamental forces exist, making it more likely that string theory is the right approach.

Second, string theory grew out of the Standard Model — the accepted, empirically validated theory incorporating all known fundamental particles and forces (apart from gravity) in a single mathematical structure — and the Standard Model also had no alternatives during its formative years. This “meta-inductive” argument, as Dawid calls it, buttresses the no-alternatives argument by showing that it has worked before in similar contexts, countering the possibility that physicists simply aren’t clever enough to find the alternatives that exist.

Emily Fuhrman for Quanta Magazine, with text by Natalie Wolchover and art direction by Olena Shmahalo.

The third non-empirical argument is that string theory has unexpectedly delivered explanations for several other theoretical problems aside from the unification problem it was intended to address. The staunch string theorist Joe Polchinski of the University of California, Santa Barbara, presented several examples of these “unexpected explanatory interconnections,” as Dawid has termed them, in a paper read in Munich in his absence. String theory explains the entropy of black holes, for example, and, in a surprising discovery that has caused a surge of research in the past 15 years, is mathematically translatable into a theory of particles, such as the theory describing the nuclei of atoms.

Polchinski concludes that, considering how far away we are from the exceptionally fine grain of nature’s fundamental distance scale, we should count ourselves lucky: “String theory exists, and we have found it.” (Polchinski also used Dawid’s non-empirical arguments to calculate the Bayesian odds that the multiverse exists as 94 percent — a value that has been ridiculed by the Internet’s vocal multiverse critics.)

One concern with including non-empirical arguments in Bayesian confirmation theory, Dawid acknowledged in his talk, is “that it opens the floodgates to abandoning all scientific principles.” One can come up with all kinds of non-empirical virtues when arguing in favor of a pet idea. “Clearly the risk is there, and clearly one has to be careful about this kind of reasoning,” Dawid said. “But acknowledging that non-empirical confirmation is part of science, and has been part of science for quite some time, provides a better basis for having that discussion than pretending that it wasn’t there, and only implicitly using it, and then saying I haven’t done it. Once it’s out in the open, one can discuss the pros and cons of those arguments within a specific context.”

The Munich Debate

Laetitia Vancon for Quanta Magazine

The trash heap of history is littered with beautiful theories. The Danish historian of cosmology Helge Kragh, who detailed a number of these failures in his 2011 book, Higher Speculations, spoke in Munich about the 19th-century vortex theory of atoms. This “Victorian theory of everything,” developed by the Scots Peter Tait and Lord Kelvin, postulated that atoms are microscopic vortexes in the ether, the fluid medium that was believed at the time to fill space. Hydrogen, oxygen and all other atoms were, deep down, just different types of vortical knots. At first, the theory “seemed to be highly promising,” Kragh said. “People were fascinated by the richness of the mathematics, which could keep mathematicians busy for centuries, as was said at the time.” Alas, atoms are not vortexes, the ether does not exist, and theoretical beauty is not always truth.

Except sometimes it is. Rationalism guided Einstein toward his theory of relativity, which he believed in wholeheartedly on rational grounds before it was ever tested. “I hold it true that pure thought can grasp reality, as the ancients dreamed,” Einstein said in 1933, years after his theory had been confirmed by observations of starlight bending around the sun.

The question for the philosophers is: Without experiments, is there any way to distinguish between the non-empirical virtues of vortex theory and those of Einstein’s theory? Can we ever really trust a theory on non-empirical grounds?

In discussions on the third afternoon of the workshop, the LMU philosopher Radin Dardashti asserted that Dawid’s philosophy specifically aims to pinpoint which non-empirical arguments should carry weight, allowing scientists to “make an assessment that is not based on simplicity, which is not based on beauty.” Dawidian assessment is meant to be more objective than these measures, Dardashti explained — and more revealing of a theory’s true promise.

Gross said Dawid has “described beautifully” the strategies physicists use “to gain confidence in a speculation, a new idea, a new theory.”

“You mean confidence that it’s true?” asked Peter Achinstein, an 80-year-old philosopher and historian of science at Johns Hopkins University. “Confidence that it’s useful? Confidence that …”

“Let’s give an operational definition of confidence: I will continue to work on it,” Gross said.

“That’s pretty low,” Achinstein said.

“Not for science,” Gross said. “That’s the question that matters.”

Kragh pointed out that even Popper saw value in the kind of thinking that motivates string theorists today. Popper called speculation that did not yield testable predictions “metaphysics,” but he considered such activity worthwhile, since it might become testable in the future. This was true of atomic theory, which many 19th-century physicists feared would never be empirically confirmed. “Popper was not a naive Popperian,” Kragh said. “If a theory is not falsifiable,” Kragh said, channeling Popper, “it should not be given up. We have to wait.”

But several workshop participants raised qualms about Bayesian confirmation theory, and about Dawid’s non-empirical arguments in particular.

Carlo Rovelli, a proponent of loop quantum gravity (string theory’s rival) who is based at Aix-Marseille University in France, objected that Bayesian confirmation theory does not allow for an important distinction that exists in science between theories that scientists are certain about and those that are still being tested. The Bayesian “confirmation” that atoms exist is essentially 100 percent, as a result of countless experiments. But Rovelli says that the degree of confirmation of atomic theory shouldn’t even be measured in the same units as that of string theory. String theory is not, say, 10 percent as confirmed as atomic theory; the two have different statuses entirely. “The problem with Dawid’s ‘non-empirical confirmation’ is that it muddles the point,” Rovelli said. “And of course some string theorists are happy of muddling it this way, because they can then say that string theory is ‘confirmed,’ equivocating.”

The German physicist Sabine Hossenfelder, in her talk, argued that progress in fundamental physics very often comes from abandoning cherished prejudices (such as, perhaps, the assumption that the forces of nature must be unified). Echoing this point, Rovelli said “Dawid’s idea of non-empirical confirmation [forms] an obstacle to this possibility of progress, because it bases our credence on our own previous credences.” It “takes away one of the tools — maybe the soul itself — of scientific thinking,” he continued, “which is ‘do not trust your own thinking.’”

The Munich proceedings will be compiled and published, probably as a book, in 2017. As for what was accomplished, one important outcome, according to Ellis, was an acknowledgment by participating string theorists that the theory is not “confirmed” in the sense of being verified. “David Gross made his position clear: Dawid’s criteria are good for justifying working on the theory, not for saying the theory is validated in a non-empirical way,” Ellis wrote in an email. “That seems to me a good position — and explicitly stating that is progress.”

In considering how theorists should proceed, many attendees expressed the view that work on string theory and other as-yet-untestable ideas should continue. “Keep speculating,” Achinstein wrote in an email after the workshop, but “give your motivation for speculating, give your explanations, but admit that they are only possible explanations.”

“Maybe someday things will change,” Achinstein added, “and the speculations will become testable; and maybe not, maybe never.” We may never know for sure the way the universe works at all distances and all times, “but perhaps you can narrow the live possibilities to just a few,” he said. “I think that would be some progress.”

Reprinted from The Atlantic